As the data grows in relational databases (new business requirements, managing audit data etc), the cost associated with the disk systems to store that data and the resources required to manage them becomes vital.

Hence it is necessary to adopt techniques like compression, de-duplication etc to reduce the storage costs.

Lets go one by one of compression techniques and usage, I will be discussing the de-duplication in length in another post.

Types of Compression

|

Physical Compression |

Logical Compression |

External Compression |

|

Hardware Assisted Block/Page Level |

Segment Level Row Level TDE Binary Compression |

Use custom algorithm for ex: CLEMCOM etc for mainframes |

What is compression for Any RDBMS?

-Data is compressed when it is written to block – Not true any more!

-Decompressed when it should read from the block

- -Basically a arithmetic algorithm’s to shorten or simplify your data

- Huffman’s

- CLEAMCOM

- Swarm

- Less data storage to hold the compress the data

- More cpu to compress and decompress data

- By removing redundant data at page/block level

-Requires

-Primary Functions on

Oracle’s journey thus so far…

Oracle Database 8i:

- Index compression introduced (Key Factoring)

- Table compression introduced (batch/Basic) only)

- RMAN: Unused block compression

- Data Pump (export/import): Metadata compression

- Lob compression – utl_compress

- Structured/relational data segment compression (DML/Batch) (Heap/IOT/Index/External/Materialized views)

- Unstructured data compression (Lobs) (SecureFiles)

- RMAN: Binary Compression for backup data

- Data Pump: Expanded export file compression capabilities

- Data Guard: Redo Transport Services – Network compression

- Hybrid Column Compression – (Exadata only)

- Archive Log Compression (Surprise ????)

Oracle Database 9iR2

Oracle Database 10g

Oracle Database 11g

Oracle Database 12c?

- Some Terminologies used in Compression techniques:-

Symbol Table – Dictionary Table (IBM/SQL etc)

- Static, Table wide dictionary approch

- Due to global in nature can yield higher compression factors

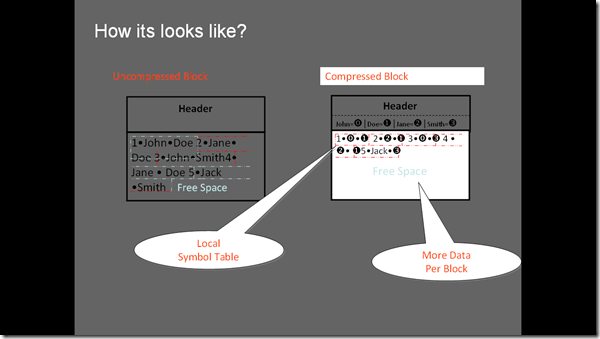

- Compressed blocks contain a structure called a symbol table that maintains compression metadata. The symbol table is stored as another table in the block. Each column in a row in a block references back to an entry in the symbol table in the block

- Duplicate values are eliminated by first adding a single copy of the duplicate value to the symbol table when a block is compressed. Each duplicate value is then replaced by a short reference to the appropriate entry in the symbol table.

- This makes compressed data self-contained within the database block as the metadata used to translate compressed data into its original state is contained within the block.

- Multi-Column Compression If a sequence of columns are having common data, then instead of saving each individual column as separate entry, the combination of the columns will be saved in the symbol table as a whole. This approach is particularly useful in case of materialized views using grouping sets and cubes (where the data is ordered).

- Cross-Column Compression As explain above, the symbol table holds the common data. This is true for the column values for different columns. So, if column values from the different columns are different, they all will share the same symbol table entry.

- Thresholds or factor that yields the benefit then only starts

Symbol Table (Oracle’s) – Local (Row level compression)

Compression factor

Differences between a Global Dictionary Vs. Symbol tables

|

Dictionary Table |

Symbol Table |

|

Static |

Dynamic |

|

Global to Table (stores in dictionary) |

Local to Block |

|

Benefits Uniform – Static Data |

Benefits to dynamic data |

|

User created |

System created |

|

Multiple I/O – Decrease buffer cache efficiency |

Single I/O – Increase buffer cache efficiency |

|

Repeats entries in dictionary |

Since its local no repeats |

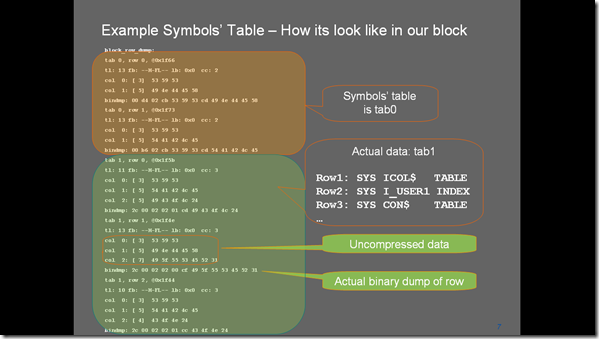

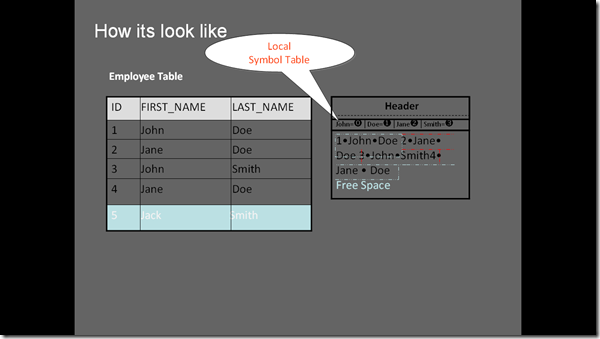

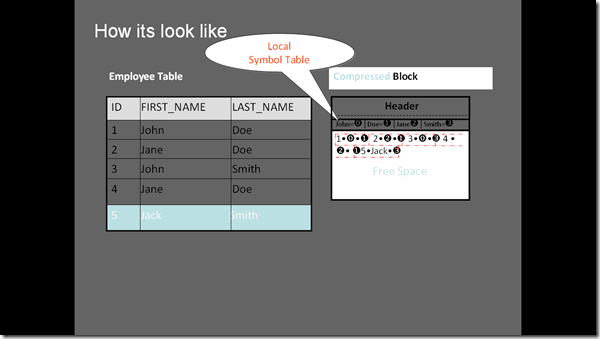

Example of Symbol Table: Taxonomy of a compressed block

If you dump a block that is compressed by any oracle methods above, it looks like

As updated here the repeated values stored are copied to symbol table (i.e tab 0) and points to original row place or reference.

Oracle Compression Methods:-

OLTP Table/Row Compression

Basic Compression

- Insert /%APPEND%/

- Direct Load operations kicks in

OLTP Table Compression Syntax:

CREATE TABLE emp (

emp_id NUMBER

, first_name VARCHAR2(128)

, last_name VARCHAR2(128)

) COMPRESSFOR ALL OPERATIONS;

Direct Load Compression Syntax (default):

CREATE TABLE emp (

emp_id NUMBER

, first_name VARCHAR2(128)

, last_name VARCHAR2(128)

) COMPRESS[FOR DIRECT_LOAD OPERATIONS];

Exadata

Create table comp_table

Compress for QUERY [LOW|HIGH]

Compress for ARCHIVE [LOW|HIGH]

as select * from MY_UNCOMP_TABLE;

What is block Level Compression and how does Oracle works?

Compression is applied at block level

Blocks will only be compressed if

- data is sufficiently large to fill the block

- rows have low enough cardinality

Columns will be reordered within each block to achieve optimal compression ratios

A segment may contain

- compressed and uncompressed blocks (when compression has added later to that table)

- blocks compressed on different columns

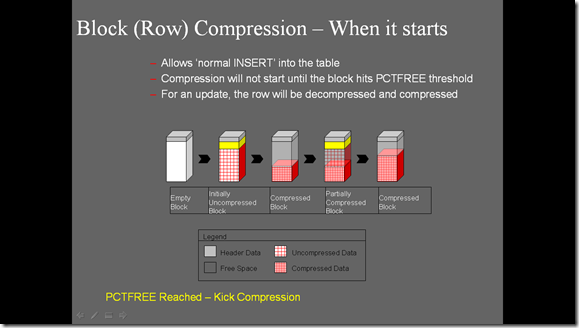

Block (Row) Compression – When it starts

- Allows ‘normal INSERT’ into the table

- Compression will not start until the block hits PCTFREE threshold

- For an update, the row will be decompressed and compressed

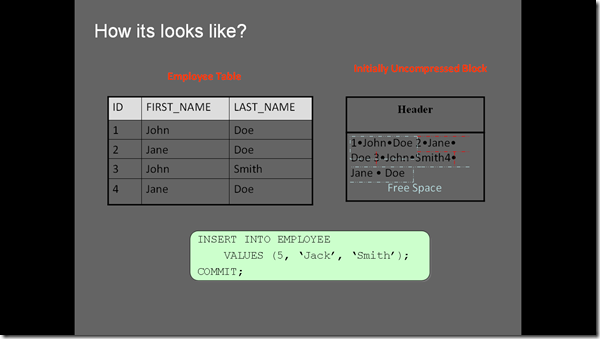

Compression in Action:-

For an Uncompressed block, there will be no symbol table , its just look like

For an compressed block,there will be a symbol table, here we have rows with names , John, Doe, Jane, Smith, where John, Doe, Jane values are repeated in the block. A symbol table will be created with the count of their rows, see next two slides

See below the original data has been replaced with symbols , and the original data is stored i.e the values Jane,Doe,Smith etc .

Now see the free space, that is has accumulated.

Advantages of Block/Row/Table Level Compression

Disk Space saving:

This is the obvious reason for implementing data compression. Although the space saving comes at a cost of decreased query performance (time required to decompress the data), when used along with other features, this can be minimized.

Coexisting compressed and non-compressed data:

The same table can have both compressed and non-compressed data. This is possible because the compression is at block level, one block might hold compressed data and the other block might hold normal uncompressed data. This is particularly useful with partitioning where you can compress the old partitions while keep the current partition as non-compressed.

Less Buffer Size required:

The data from a compressed block is read and cached in its compressed format and it is decompressed only at data access time. As the data is cached in compressed form, more data can be hold into the same

amount of buffer cache.

Trade-offs of Compression:-

Not an free – License is required (except Basic 9i method) , see how much you are saving before to use, since price is high

Do not capacity it while comparing to disks – rather look into max IOPS

RAM Demands: Since many blocks now demand and want more time to be in buffer

Must know which is suitable under available options

–Performance impacts

–Resource availability

Another compression at files, Secure Files (Lobs) – Compression

SecureFiles is a new 11g feature designed to break the performance barrier keeping file data out of databases

Next-generation LOB

- Superset of LOB interfaces allows easy migration from LOBs

- Transparent deduplication, compression, and encryption

- Leverage the security, reliability, and scalability of database

- Single security model

- Single view of data

- Single management of data

- Scalable to any level using SMP scale-up or grid scale-out

Enables consolidation of file data with associated relational data

Secure Files Syntax

•SecureFiles Deduplication Syntax

CREATE TABLE images (

image_id NUMBER,

image BLOB)

LOB(image) STORE AS SECUREFILE

(TABLESPACE lob_tbs DEDUPLICATE);

•SecureFiles Compression Syntax

CREATE TABLE images (

image_id NUMBER,

image BLOB)

LOB(image)STORE AS SECUREFILE

(TABLESPACE lob_tbs COMPRESS);

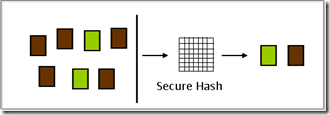

SecureFilesDeduplication:-

You can see that a Brown and Green (files/pages) has been replaced with single but a hash chain has built replacing or eliminating the redundant physical files.

You can see that a Brown and Green (files/pages) has been replaced with single but a hash chain has built replacing or eliminating the redundant physical files.

- De duplication is the mechanism to eliminate redundant physical files

- Enables storage of a single physical image for duplicate data

- Significantly reduces space consumption

- Dramatically improves writes and copy operations

- No adverse impact on read operations

- –May actually improve read performance for cache data

- Duplicate detection happens within a table, partition or sub-partition

- Specially useful for content management, email applications and data archival applications

Data Pump Compression

- Metadata compression available since Oracle Database 10g

- Oracle Database 11g extends compression to table data during exports

- Single step compression of both data and metadata

–No need to decompress before import

–Compressed data directly hits disk resulting in reduced disk space requirements

- –Internal tests reduced dump file size up to 75%

- Compression factor comparable to GNU gzip utility

- Application transparent ,

- COMPRESSION = ALL|METADATA_ONLY|NONE

Complete Data Pump functionality available on compressed files

Caveats

Performance cost: ~10% overhead

Compression Ratio: comparable to gzip

Backup Compression

Compression features in the RMAN utility:

- Since inception: Null compression – does not backup data blocks that have never been allocated

- Since Oracle Database 10g Release 2: Unused block compression – RMAN skips blocks that currently do not contain data

- Since Oracle Database 11g: Binary compression (or backup set compression) – applies a compression algorithm to the blocks as they are backed up, if configured/requested

- All RMAN compression techniques are totally transparent during restore operations

Fast RMAN Compression:-

- Compresses the backup set contents before writing them to disk or tape

- No extra decompression steps are required during recovery when you use High performance,

- industry standard compression algorithm

- 40% faster backup compression versus Oracle Database 10g

- Suitable for fast, incremental daily backupsReduces network usage

Examples

RMAN> CONFIGURE DEVICE TYPE DISK BACKUP TYPE TO COMPRESSED BACKUPSET;

followed by ..

RMAN> CONFIGURE COMPRESSION ALGORITHM ‘HIGH’;

RMAN> CONFIGURE COMPRESSION ALGORITHM ‘MEDIUM’;

RMAN> CONFIGURE COMPRESSION ALGORITHM ‘LOW’;

RMAN> CONFIGURE COMPRESSION ALGORITHM ‘BASIC’;Or

11gR1:

RMAN> CONFIGURE COMPRESSION ALGORITHM 'BZIP2';

11gR2:

RMAN> CONFIGURE COMPRESSION ALGORITHM 'BASIC';

Or for both, reset to default:

RMAN> CONFIGURE COMPRESSION ALGORITHM CLEAR;Watch out for Trade-offs for each type: However the following gives overview of those

LOW – corresponds to LZO (11gR2) – smallest compression ratio, fastest

MEDIUM – corresponds to ZLIB (11gR1) – good compression ratio, slower than LOW

HIGH – corresponds to unmodified BZIP2 (11gR2) – highest compression ratio, slowest

BASIC (which is free) – corresponds to BZIP2 (10g style compression) – compression ratio in the range of MEDIUM, but slower

Oracle Network Compression

Fast re-sync of standby database after network outages

Lower bandwidth networks (<100Mbps)

- 15-35% less time required to transmit 1 GB of data

- Bandwidth consumption reduced up to 35%

High bandwidth networks (>100 Mbps)

- Compression will not reduce transmission time

- But will reduce bandwidth consumption up to 35%

The COMPRESSION attribute is used to specify whether redo data is transmitted to a redo transport destination in compressed form or uncompressed form when resolving redo data gaps.

The following example shows the COMPRESSION attribute with the LOG_ARCHIVE_DEST_n parameter.

- LOG_ARCHIVE_DEST_3='SERVICE=denver SYNC COMPRESSION=ENABLE'

- LOG_ARCHIVE_DEST_STATE_3=ENABLE

- What else

- Heard (I have only heard) there is a feature in 10g partially developed and still partial in 11g , that archive’s can be compressed.

CAUTION: DO NOT SET IN BUSINESS SYSTEM IT WILL CRASH THE DB, though this works in 10g,11g, do not set especially below 11.2.0.2

–TO ENABLE THE COMPRESSION THE FOLLOWING COMMAND IS ISSUED

SQL> ALTER DATABASE ARCHIVELOG COMPRESS ENABLE;

–TO DISABLE THE COMPRESSION THE FOLLOWING COMMAND IS ISSUED

SQL> ALTER DATABASE ARCHIVELOG COMPRESS DISABLE;

Interested? Search for an undocumented parameter _log_archive_compress_enable

Interested to use Oracle Compression, wait, before to that read this table, which attracts license which does not will give you fair idea.

|

Advanced Compression License Required |

Name |

Description |

|

Y |

Backup ZLIB Compression |

ZLIB compressed backups are being used. |

|

N |

Backup BZIP2 Compression |

BZIP2 compressed backups are being used. |

|

N |

Backup BASIC Compression |

BASIC compressed backups are being used. |

|

Y |

Backup LOW Compression |

LOW compressed backups are being used. |

|

Y |

Backup MEDIUM Compression |

MEDIUM compressed backups are being used. |

|

Y |

Backup HIGH Compression |

HIGH compressed backups are being used. |

|

Y |

SecureFile Compression (user) |

SecureFile Compression is being used |

|

N |

SecureFile Compression (system) |

SecureFile Compression is being used by system users |

|

Y |

SecureFile Deduplication (user) |

SecureFile Deduplication is being used |

|

N |

SecureFile Deduplication (system) |

SecureFile Deduplication is being used by system users |

|

Y |

HeapCompression |

Heap Compression is being used And in DBA_TABLES.COMPRESSED_FOR column has one of the following values:- - FOR ALL OPERATIONS,- OLTP,- QUERY LOW,- QUERY HIGH,- ARCHIVE LOW - ARCHIVE HIGH |

|

Y |

Hybrid Columnar Compression |

Hybrid Columnar Compression is used |

|

Y (Conditional) |

Data Guard |

Data Guard, a set of services, is being used to create, maintain, manage, and monitor one or more standby databases. ( With “Compression used: TRUE” in FEATURE INFO column. ) |

|

Y (Conditional) |

Oracle Utility Datapump (Export) |

Oracle Utility Datapump (Export) has been used. ( With “compression used: >0” in FEATURE INFO column. ) |

|

Y (Conditional) |

Oracle Utility Datapump (Import) |

Oracle Utility Datapump (Import) has been used. ( With “compression used: >0” in FEATURE INFO column. ) |

This post can also be downloaded as Presentation: Click here to download

-Thanks

Geek DBA

Follow Me!!!